Your landing page is doing okay. Conversion sits somewhere between 2% and 4% and you've convinced yourself that's fine. But the last time you changed anything — the headline, the CTA, the hero image — you did it based on gut feel, shipped it, and watched the conversion number move slightly in a direction you couldn't fully explain. That's not optimisation. That's decoration. Real landing page A/B testing is a systematic process: generate meaningful variations, run statistically valid tests, read the results correctly, and iterate. AI changes the first part of that equation dramatically — you can now generate 10 credible headline variations in five minutes instead of five days. Here's how to build the full system.

💡 TL;DR

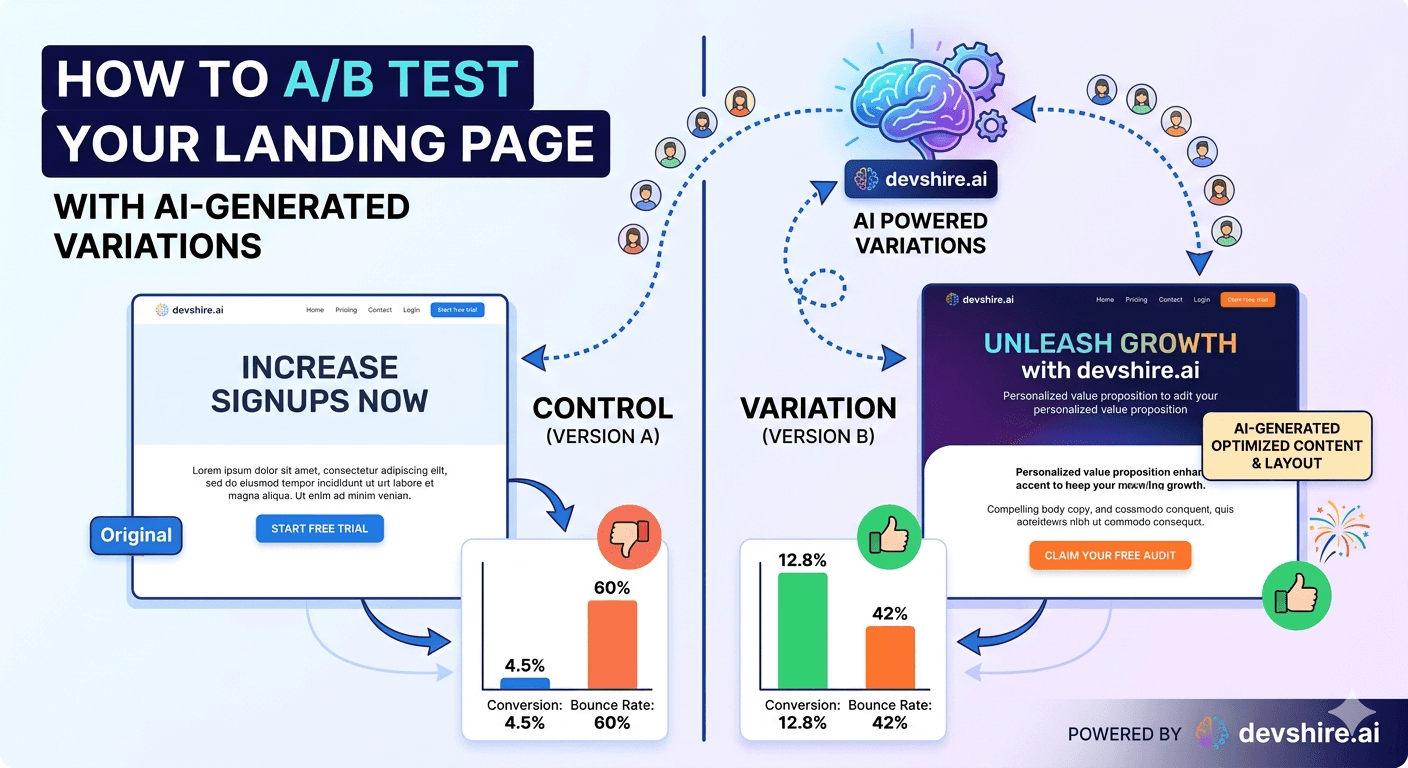

AI dramatically speeds up variation generation — the part of A/B testing that used to take weeks. But test design and statistical validity still require the same rigour as always. Use AI to generate 5–10 copy or layout variations fast, pick 2–3 to test properly, run for a minimum of 2 weeks with enough traffic to reach significance, and act on results only when sample size justifies it. AI helps you test more things, not skip the testing.

Why Most Landing Page A/B Tests Fail Before They Start

The problem isn't that teams don't test. It's that they design tests that can't produce meaningful results. Three failure modes kill most A/B programmes before they get useful data.

❌ Testing too many variables at once

You change the headline, the CTA text, the button colour, and the hero image in the same test. Conversion changes. You have no idea why. A/B tests should isolate one variable. If you want to test multiple changes simultaneously, run a multivariate test with enough traffic to support it — which most SaaS landing pages don't have.

❌ Calling tests too early

You've had 200 visitors across two variants and one is converting at 4.2% vs 3.8%. You call it for the 4.2% version. That's a coin flip, not a result: at roughly 100 visitors per variant, the gap amounts to less than one conversion. Detecting a small difference at 95% confidence typically takes 1,000+ conversions per variant. Stopping before two full weeks also leaves you exposed to day-of-week traffic swings.
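To make the "coin flip" point concrete, here is a minimal sketch of a pooled two-proportion z-test using only the Python standard library. The function name and the visitor and conversion counts are hypothetical, chosen for illustration; your testing tool may use a different test.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for a pooled two-proportion z-test."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no conversions at all: nothing to distinguish
    z = (p_b - p_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical early read: 200 visitors split evenly, 3 vs 4 conversions.
p_small = two_proportion_p_value(3, 100, 4, 100)

# Same 3% vs 4% rates, but with 10,000 visitors per variant.
p_large = two_proportion_p_value(300, 10_000, 400, 10_000)

print(f"small sample p-value: {p_small:.2f}")
print(f"large sample p-value: {p_large:.5f}")
```

With the small sample the p-value comes out at about 0.7, nowhere near the 0.05 threshold. The same rates only become convincing once each variant has thousands of visitors.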

❌ Testing trivial changes

Button colour tests are famous in conversion optimisation circles for producing tiny, unreproducible results. Test things that could actually move the needle: your headline value proposition, your social proof format, your CTA framing, or your hero section structure. If a change couldn't plausibly move conversion by 10% or more in relative terms, it's probably not worth a test slot.

Where AI Actually Helps: Generating Meaningful Variations Fast

The part of A/B testing that AI genuinely accelerates is variation generation. Writing 8 credible headline variants used to take a copywriter a day. With a well-prompted AI, you can generate 20 variations in 10 minutes, evaluate them for strategic fit, and pick the 2–3 worth testing — all before your first morning coffee.

✍️ Headline variation generation

Give AI your current headline, your target customer, your core value proposition, and your competitors' positioning. Ask for 10 variations that each lead with a different angle: outcome-focused, pain-focused, speed-focused, proof-focused, contrarian. You'll get 10 credible directions in minutes instead of days.
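One way to keep those inputs consistent from test to test is to assemble the prompt in code. This is a rough sketch: the function name, field names, and example values are all hypothetical, and the resulting string can be pasted into whichever AI tool you use.

```python
ANGLES = [
    "outcome-focused",
    "pain-focused",
    "speed-focused",
    "proof-focused",
    "contrarian",
]


def build_headline_prompt(current_headline, target_customer,
                          value_proposition, competitor_positioning,
                          n_variations=10):
    """Assemble a plain-text prompt asking for headline variations by angle."""
    angle_list = ", ".join(ANGLES)
    return (
        f"Current headline: {current_headline}\n"
        f"Target customer: {target_customer}\n"
        f"Core value proposition: {value_proposition}\n"
        f"Competitor positioning: {competitor_positioning}\n\n"
        f"Write {n_variations} alternative headlines. "
        f"Spread them across these angles: {angle_list}. "
        "Label each headline with the angle it uses."
    )


# Placeholder inputs for illustration only.
prompt = build_headline_prompt(
    current_headline="The data pipeline tool for modern teams",
    target_customer="Data engineers at 20-200 person SaaS companies",
    value_proposition="Build a production pipeline in an afternoon",
    competitor_positioning="Competitors lead with enterprise scale",
)
print(prompt)
```

Asking for a labelled angle on each headline makes it easier to pick 2–3 variations that differ in strategy, not just in wording.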

🎯 CTA copy variations

"Get started" is one of the most common CTAs on the internet and one of the least specific. Ask AI to generate 10 alternative CTAs that specify the outcome ("Start building your first pipeline"), reduce friction ("See it in 2 minutes"), or use social proof ("Join 3,400 teams already using it"). Test 2–3 of these against your current CTA.

🧩 Social proof framing

AI can rewrite the same testimonials in different formats: long-form quote, short punchy excerpt, stat-focused, outcome-focused. Test whether your social proof converts better as a quote wall, a single prominent testimonial, or a stats bar. The content is the same — the framing is the variable.

The Tooling Stack: What to Use for Landing Page A/B Tests

You don't need expensive experimentation platforms to run valid tests. Here's what actually works at different stages.

| Tool | Best for | Monthly cost | Limitation |
|---|---|---|---|
| Google Optimize (discontinued) | Legacy setups only | Free | Discontinued; migrate away |
| VWO | Non-technical teams, visual editor | $200–$500+ | Expensive at scale |
| Optimizely | Enterprise, complex experiments | $1,000+ | Overkill for most SaaS |
| Split.io | Developer-led feature flag testing | $0–$350 | Requires dev setup |
| Custom (PostHog + feature flags) | Product-integrated experimentation | $0–$450 | Dev time to build |
| Unbounce A/B | Standalone landing pages | $99–$200 | Only works on Unbounce pages |

For most early-stage SaaS: PostHog's free plan with feature flags is the best place to start. It's product-native, free up to reasonable scale, and the feature flag system handles A/B routing cleanly. If you're running standalone landing pages rather than in-product tests, Unbounce's A/B feature is the simplest option.
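If you go the custom route, the core of A/B routing is assigning each visitor to the same variant every time. The sketch below shows one common approach, hashing a visitor ID together with an experiment name. It is a generic illustration, not PostHog's or any other vendor's implementation, and the function and variant names are hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str,
                   variants=("control", "variant_b")) -> str:
    """Deterministically map a visitor to a variant.

    Hashing the experiment name together with the user ID means the same
    visitor always sees the same variant, and different experiments split
    the audience independently of each other.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest[:8], 16) % len(variants)
    return variants[bucket]


# The same visitor gets the same answer on every page load.
first = assign_variant("visitor-123", "headline-test")
second = assign_variant("visitor-123", "headline-test")

# Across many visitors the split is close to even.
counts = {"control": 0, "variant_b": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}", "headline-test")] += 1
print(first == second, counts)
```

Because assignment depends only on the ID and the experiment name, no lookup table is needed and a returning visitor never flips between versions mid-test.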

Designing a Test That Actually Produces Valid Results

Running a technically valid A/B test requires decisions that most guides skip over. Here's the checklist that makes results trustworthy.

📐 Calculate your required sample size before you start

Use a sample size calculator (Evan Miller's is the standard) with your current conversion rate, the minimum detectable effect you care about (usually 10–20% relative), and 95% confidence. If your landing page gets 500 visitors per month and you need 4,000 visitors per variant, a two-variant test needs 8,000 visitors in total, which is a 16-month test. Either find a way to increase traffic or accept lower confidence levels.
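For a rough cross-check on whatever calculator you use, the standard normal-approximation formula for comparing two proportions fits in a few lines. This sketch assumes a two-sided test and 80% power, which the article does not specify. Online calculators use slightly different formulas, so expect their numbers to differ a little.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline_rate, relative_lift,
                         alpha=0.05, power=0.80):
    """Approximate visitors needed per variant for a two-sided test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # 95% confidence
    z_beta = NormalDist().inv_cdf(power)           # assumed 80% power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def months_to_complete(per_variant, monthly_visitors, n_variants=2):
    """Months of traffic needed when visitors are split across variants."""
    return per_variant * n_variants / monthly_visitors


n_10 = visitors_per_variant(0.03, 0.10)  # 3.0% -> 3.3%
n_20 = visitors_per_variant(0.03, 0.20)  # 3.0% -> 3.6%
duration = months_to_complete(4_000, 500)

print(n_10, n_20, duration)
```

On a 3% baseline, a 10% relative lift needs on the order of 53,000 visitors per variant, and a 20% lift roughly 14,000. The duration helper counts traffic for every variant, not just one, which is easy to forget when estimating how long a test will run.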

📅 Run for at least 2 full weeks

Day-of-week traffic patterns mean a test run Monday to Friday sees different user behaviour than one run Monday to Sunday. Run every test for at least two complete week cycles to eliminate day-of-week effects. Business SaaS tools often see dramatically different Friday vs Tuesday behaviour.

🎯 Define your primary metric before the test starts

Pick one metric that defines the winner: signup conversion rate, trial-to-paid conversion, or time-on-page. Do not switch metrics mid-test because you don't like the results. This is p-hacking and it produces false conclusions. The primary metric is decided before the test runs.
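One lightweight way to hold yourself to this is to write the plan down as an immutable record before launch. The sketch below uses a frozen dataclass. The class name, field names, and example values are hypothetical, and a shared document works just as well if your team doesn't keep plans in code.

```python
from dataclasses import dataclass, FrozenInstanceError
from datetime import date


@dataclass(frozen=True)
class ExperimentPlan:
    """Decisions fixed before the test starts."""
    name: str
    primary_metric: str
    min_relative_lift: float
    visitors_per_variant: int
    start_date: date
    end_date: date


plan = ExperimentPlan(
    name="headline-outcome-vs-pain",
    primary_metric="signup_conversion_rate",
    min_relative_lift=0.10,
    visitors_per_variant=4_000,
    start_date=date(2025, 1, 6),
    end_date=date(2025, 1, 20),
)

# Trying to swap the metric mid-test fails loudly.
try:
    plan.primary_metric = "time_on_page"
    metric_changed = True
except FrozenInstanceError:
    metric_changed = False

print(plan.primary_metric, metric_changed)
```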

🚦 Don't peek at results until the test is complete

Looking at results daily and stopping the moment you see significance can push the false positive rate from the nominal 5% to 20–30%, depending on how many times you look. Set the end date before you start. Check results once. Act on them once. Looking early and stopping early is one of the most common sources of incorrect A/B test conclusions.
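The effect of peeking is easy to demonstrate with a simulation. The sketch below runs A/A tests, where both variants share the same true conversion rate, so every "significant" result is a false positive. It compares checking every day against checking once at the end. The traffic level, conversion rate, and number of simulated tests are arbitrary assumptions, and the exact inflation you see depends on them.

```python
import random
from math import sqrt
from statistics import NormalDist

Z_CRIT = NormalDist().inv_cdf(0.975)  # two-sided 95% threshold


def is_significant(conv_a, conv_b, n):
    """Pooled two-proportion z-test with n visitors in each variant."""
    pooled = (conv_a + conv_b) / (2 * n)
    se = sqrt(pooled * (1 - pooled) * 2 / n)
    if se == 0:
        return False
    return abs(conv_a - conv_b) / n / se > Z_CRIT


def simulate_aa_tests(n_tests=1000, days=14, daily_visitors=200,
                      rate=0.05, seed=42):
    """Return (false positive rate with daily peeking, rate at end only)."""
    rng = random.Random(seed)
    hit_while_peeking = 0
    hit_at_end = 0
    for _ in range(n_tests):
        conv_a = conv_b = 0
        peeked_hit = False
        for day in range(1, days + 1):
            conv_a += sum(rng.random() < rate for _ in range(daily_visitors))
            conv_b += sum(rng.random() < rate for _ in range(daily_visitors))
            if is_significant(conv_a, conv_b, day * daily_visitors):
                peeked_hit = True  # a daily peeker would have stopped here
        hit_while_peeking += peeked_hit
        hit_at_end += is_significant(conv_a, conv_b, days * daily_visitors)
    return hit_while_peeking / n_tests, hit_at_end / n_tests


peek_rate, final_rate = simulate_aa_tests()
print(f"false positives with daily peeking: {peek_rate:.1%}")
print(f"false positives checking once:      {final_rate:.1%}")
```

Checking once at the end keeps false positives near the nominal 5%. Stopping at the first significant daily reading lets through several times as many, even though no real difference exists.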

How to Read Results Without Fooling Yourself

A test result is only useful if you interpret it correctly. Here's what actually matters when you're reading the numbers.

📊 Statistical significance ≠ practical significance

A headline change that lifts conversion from 3.0% to 3.1% might reach 95% statistical significance with enough traffic, but a 0.1 percentage point increase is rarely worth shipping on its own. Ask: is the absolute lift meaningful for the business? A 10% relative lift on a 3% baseline (from 3.0% to 3.3%) starts to become interesting.
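Reporting the absolute lift, the relative lift, and a confidence interval side by side makes this distinction visible. The sketch below uses a normal-approximation interval for the difference between two conversion rates. The traffic numbers and the 10% practical threshold are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def lift_summary(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Absolute and relative lift of B over A, with a CI on the difference."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    diff = p_b - p_a
    se = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return {
        "absolute_lift_pp": diff * 100,          # percentage points
        "relative_lift_pct": diff / p_a * 100,   # percent of baseline
        "ci_low_pp": (diff - z * se) * 100,
        "ci_high_pp": (diff + z * se) * 100,
    }


# Hypothetical high-traffic test: 3.0% vs 3.1% on 1,000,000 visitors each.
summary = lift_summary(30_000, 1_000_000, 31_000, 1_000_000)

statistically_significant = summary["ci_low_pp"] > 0
# Assumed business threshold: only ship lifts of 10% relative or more.
practically_significant = summary["relative_lift_pct"] >= 10

for key, value in summary.items():
    print(f"{key}: {value:.3f}")
print(statistically_significant, practically_significant)
```

Here the interval excludes zero, so the result is statistically significant, yet the relative lift is only about 3.3% and falls short of the practical threshold.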

🔁 Replicate before you conclude

A single test is a hypothesis, not a fact. If a headline variation wins by 15%, roll it out — but schedule a replication test three months later to confirm the lift holds. Landing page conversion is affected by traffic source mix, seasonality, and product changes. A one-time win might not be a durable win.

🧠 Negative results are still results

A test where the control wins is telling you something — your variation was wrong, but you've ruled out a hypothesis and learned something about what your audience doesn't respond to. Negative results should be documented just as rigorously as positive ones. Teams that only record wins end up re-testing the same failed ideas.

The Bottom Line

AI accelerates variation generation — use it to produce 10–20 headline, CTA, or social proof variations in minutes. Then pick 2–3 to test properly. It doesn't replace the testing; it just removes the bottleneck of writing variations.

Test one variable at a time. Changing multiple elements in the same test makes results uninterpretable.

Calculate required sample size before you start. If your traffic can't support a valid test in a reasonable timeframe, don't run the test — or accept that you need lower confidence thresholds.

Run every test for a minimum of two complete weeks to account for day-of-week traffic variation.

Define your primary metric before launch. Don't switch metrics mid-test because you don't like what you're seeing.

Statistical significance at 95% with a small absolute lift is not automatically worth acting on. Look for practical significance, not just statistical.

Document negative results. They're half the value of a testing programme — they tell you what your audience doesn't respond to.

Frequently Asked Questions

How many visitors do I need to run a valid landing page A/B test?

It depends on your current conversion rate and the minimum effect size you want to detect. A rough rule: if you want to detect a 10% relative lift (e.g. from 3.0% to 3.3%) at 95% confidence and 80% power, you typically need around 50,000 visitors per variant. A 20% relative lift on the same baseline needs closer to 14,000. Use Evan Miller's sample size calculator to get the precise number for your baseline. If your traffic can't support that, consider 80% confidence or focus on larger, more impactful changes.

Can AI generate landing page variations that actually convert better?

AI can generate credible, strategically varied copy and structure quickly — but it can't predict which variation will win without running an actual test. What AI does well: generating multiple distinct angles (outcome-focused, pain-focused, speed-focused) fast. What AI can't do: replace the statistical test that determines which angle actually resonates with your specific audience. Use AI to generate variations; use testing to find the winner.

What's the best free tool for A/B testing a landing page?

For product pages, PostHog's free plan with feature flags handles A/B routing well and integrates natively with your analytics. For standalone marketing landing pages, Unbounce has a built-in A/B testing feature, though it is a paid product. Google Optimize has been discontinued, so if you're still relying on it, migrate to one of these alternatives. VWO and Optimizely are strong options but expensive for early-stage teams.

How long should I run an A/B test?

At minimum, two full calendar weeks — regardless of whether you've hit your sample size target. This accounts for day-of-week traffic variation that could skew results if you stop mid-week. If your calculated sample size requires longer than two weeks, run for the full required period. Don't stop a test early because one variant looks like it's winning — early peaks are common and often don't hold.

What elements of a landing page are worth A/B testing?

In order of typical impact: headline value proposition, primary CTA text and placement, hero section layout (text-left vs video vs screenshot), social proof format and placement, pricing presentation, and form length. Avoid testing button colours or font choices as your primary test — the effect sizes are usually too small to detect reliably and too small to matter even if you do.

How do I use AI to write landing page A/B test variations?

Give the AI your current headline, your target customer profile, your core value proposition, and what makes you different from competitors. Ask it to generate 10 headline variations each leading with a different angle — outcome, pain point, speed, proof, contrarian. Evaluate each for strategic fit with your positioning, then pick 2–3 to test. Do the same for CTA copy, subheadlines, and social proof framing.

What's the difference between A/B testing and multivariate testing for landing pages?

A/B testing changes one variable and compares two versions — simpler, requires less traffic, produces clearer conclusions. Multivariate testing changes multiple elements simultaneously and tests all combinations — more powerful but requires significantly more traffic and is harder to interpret. For most SaaS landing pages, A/B testing is the right approach. Multivariate testing only makes sense with 50,000+ monthly visitors.
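The traffic cost of multivariate testing comes from the number of combinations. A quick sketch with hypothetical page elements shows how fast the cells multiply and how thin the traffic per cell becomes:

```python
from itertools import product

# Hypothetical elements, each with two options under test.
elements = {
    "headline": ["current", "outcome-focused"],
    "cta": ["Get started", "See it in 2 minutes"],
    "hero": ["screenshot", "video"],
}

# Every combination of options is its own variant.
combinations = list(product(*elements.values()))

monthly_visitors = 50_000
visitors_per_cell = monthly_visitors / len(combinations)

print(len(combinations), visitors_per_cell)
```

Three elements with two options each already produce eight variants, so 50,000 monthly visitors leaves 6,250 per variant per month. Each added element doubles the number of cells again.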

Devshire Team
