A 4-person SaaS team shipping a legal document review product added AI-powered clause extraction in 11 days. No machine learning engineers. No model training. No GPU infrastructure. They used the Claude API with a carefully designed prompt, a simple preprocessing step, and a feedback loop that improved accuracy over the first 30 days. The result: a feature that reduced manual review time by 70% for their customers and became the primary reason new users converted from trial.

💡 TL;DR

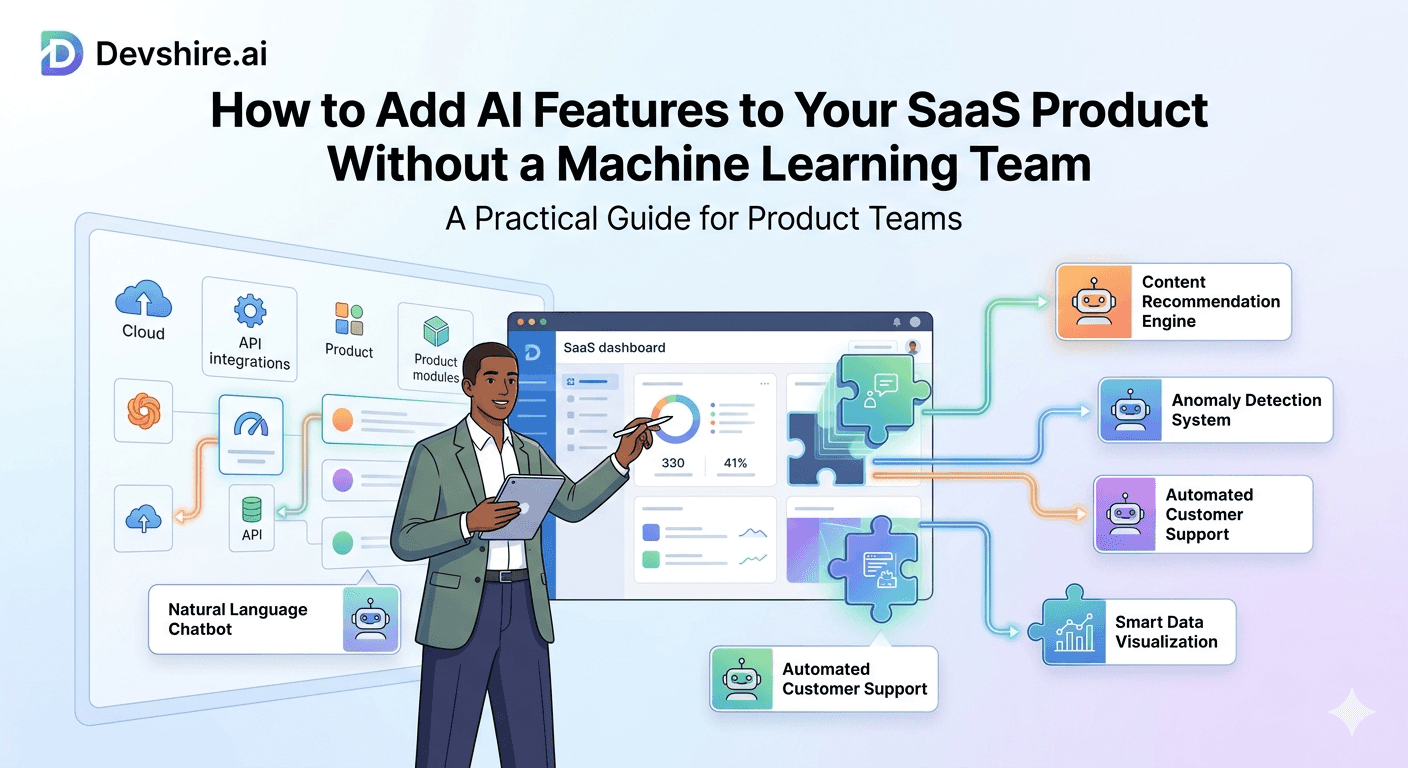

You don't need a machine learning team to add AI features to your SaaS. In 2026, calling a hosted AI API, rather than building a custom model, covers 95% of what SaaS products actually need. Start with the Claude, OpenAI, or Gemini APIs for language tasks. Use Pinecone or Supabase pgvector for semantic search. Use Replicate for image or audio features. Most AI features in SaaS products ship in 1–3 weeks using this stack. The biggest mistake is treating AI as an infrastructure problem when it's actually a product design problem.

What Your SaaS Actually Needs From AI — vs What You Think It Needs

Most product teams overestimate the infrastructure required to add AI features to SaaS. They imagine GPU clusters, training pipelines, and data science teams. That's building a frontier model. It has nothing to do with adding AI features to a product.

Here's the honest breakdown of what AI features in SaaS products actually require:

| Feature Type | What You Actually Need | Time to Ship | Infrastructure Cost |
|---|---|---|---|
| Content generation | Claude or GPT-4o API call | 2–5 days | $0.01–$0.10 per generation |
| Document summarisation | API call with document as context | 1–3 days | $0.002–$0.05 per doc |
| Semantic search | Embeddings API + vector DB | 1–2 weeks | $20–$100/month at early scale |
| Data classification | Structured output API call | 3–7 days | $0.001–$0.02 per classification |
| Image analysis | GPT-4o Vision or Claude vision API | 2–5 days | $0.01–$0.15 per image |
| Speech to text | Whisper API (OpenAI) or Deepgram | 1–3 days | $0.006 per minute (Whisper) |
| Custom ML model | ML team, training data, GPU infra | 3–9 months | $50,000+ to start |

The custom ML model row is almost never the right choice for features in the rows above it. If you need speech to text, Whisper costs $0.006 per minute. Training a custom speech model from scratch costs months and six figures. The API wins. Every time.

Building the AI API Layer — The Pattern That Works

The most common mistake when teams add AI features to SaaS is calling the AI API directly from the frontend. Don't do this. Your API key ends up in client-side code, you lose control over rate limiting, and you can't add caching, logging, or input validation. Build a thin AI service layer on your backend instead.

This layer gives you one place to add caching (cache identical summaries for 24 hours), rate limiting per user, cost tracking per organisation, and error handling. Every AI feature goes through this layer. Not through direct API calls from your routes.
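Here is a minimal Python sketch of that layer. It assumes your real SDK call is wrapped in a hypothetical `call_model(prompt)` function that returns the completion text and its cost in USD. The sketch covers the 24-hour cache, per-organisation cost tracking, and a single place to handle provider errors. Rate limiting is left out here. `AIService` and its methods are illustrative names, not from any library.

```python
import hashlib
import time
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical wrapper around your provider SDK: prompt -> (text, cost_usd).
ModelCall = Callable[[str], tuple[str, float]]


@dataclass
class AIService:
    """Illustrative single entry point for every AI feature (hypothetical)."""

    call_model: ModelCall
    cache_ttl_seconds: float = 24 * 60 * 60  # reuse identical results for 24 hours
    clock: Callable[[], float] = time.monotonic
    _cache: dict[str, tuple[float, str]] = field(default_factory=dict)
    cost_by_org: dict[str, float] = field(default_factory=lambda: defaultdict(float))

    def _key(self, org_id: str, feature: str, prompt: str) -> str:
        # Scope the cache per organisation so one tenant never sees another's result.
        raw = f"{org_id}\x00{feature}\x00{prompt}".encode("utf-8")
        return hashlib.sha256(raw).hexdigest()

    def complete(self, org_id: str, feature: str, prompt: str) -> str:
        key = self._key(org_id, feature, prompt)
        now = self.clock()
        cached = self._cache.get(key)
        if cached is not None and now - cached[0] < self.cache_ttl_seconds:
            return cached[1]  # cache hit: no API call and no cost
        try:
            text, cost = self.call_model(prompt)
        except Exception as exc:
            # One place to log and translate provider errors for every feature.
            raise RuntimeError(f"AI call failed for feature {feature!r}") from exc
        self.cost_by_org[org_id] += cost  # per-organisation cost tracking
        self._cache[key] = (now, text)
        return text
```

In production the in-memory dictionaries would usually live in Redis or a database table, so the cache and cost totals survive restarts and are shared across servers.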

Structured Output — The Feature Most Teams Skip

Ask an AI model for a list of items and you get inconsistently formatted text. Use structured output (JSON mode) and you get a predictable data structure you can render directly in your UI. This is the difference between an AI feature that ships in 3 days and one that takes 3 weeks of output parsing work.
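The following sketch covers the validation side. It assumes you have already asked the model for JSON through whichever JSON mode or structured output option your provider offers. The clause schema is hypothetical. The point is to reject anything that doesn't match before it reaches your UI.

```python
import json
from dataclasses import dataclass


@dataclass
class ExtractedClause:
    # Hypothetical schema for a clause-extraction feature.
    clause_type: str
    text: str
    confidence: float


def parse_clauses(raw: str) -> list[ExtractedClause]:
    """Parse {"clauses": [{"clause_type", "text", "confidence"}, ...]}.

    Raises ValueError (json.JSONDecodeError is a subclass) on any mismatch,
    so malformed model output never reaches the UI.
    """
    data = json.loads(raw)
    if not isinstance(data, dict) or not isinstance(data.get("clauses"), list):
        raise ValueError("expected an object with a 'clauses' list")
    clauses: list[ExtractedClause] = []
    for item in data["clauses"]:
        if not isinstance(item, dict):
            raise ValueError("each clause must be an object")
        clause_type = item.get("clause_type")
        text = item.get("text")
        conf = item.get("confidence")
        if not isinstance(clause_type, str) or not isinstance(text, str):
            raise ValueError("clause_type and text must be strings")
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(conf, bool) or not isinstance(conf, (int, float)) or not 0 <= conf <= 1:
            raise ValueError("confidence must be a number from 0 to 1")
        clauses.append(ExtractedClause(clause_type, text, float(conf)))
    return clauses
```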

[INTERNAL LINK: Claude API integration guide → claude-api-saas-integration]

Semantic Search — The AI Feature With the Highest ROI

If your SaaS has any content — documents, notes, emails, conversations, records — semantic search is the highest-ROI AI feature you can add. Users type a natural language question and find relevant content even if the exact words don't match. It's the difference between keyword search (find me docs containing "NDA") and intent search (find me all agreements with confidentiality clauses).

How to Build It Without a Vector Database Specialist

If you're already on Supabase, pgvector is free and handles most early-stage SaaS workloads without a separate vector database service. For larger scale or more advanced retrieval, Pinecone starts at $0 for up to 100,000 vectors and $70/month for production use.

1. Enable pgvector on your Postgres instance. It takes one SQL command: `CREATE EXTENSION vector;`. Then add a vector column to your content table.

2. Generate embeddings on content write. Every time content is saved, call an embeddings API such as OpenAI's text-embedding-3-small ($0.00002 per 1,000 tokens) or Voyage AI, the embedding provider Anthropic recommends (Anthropic does not offer its own embedding model). Store the embedding vector in your database.

3. Query by similarity on search. Convert the user's search query to an embedding, then run a cosine similarity query against your stored vectors. Return the top 5–10 most similar results.

4. Optional: re-rank with an LLM. Pass the top results to Claude with the user's question, and ask it to rank them by relevance and explain why. This adds 200–400ms and $0.005–$0.02 per search but dramatically improves result quality for complex queries.
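Steps 2 and 3 can be sketched in memory like this. A hypothetical `embed` function stands in for your embeddings API. In Postgres, pgvector does the same scoring inside the database. Its `<=>` operator returns cosine distance (1 minus cosine similarity), so you order by `embedding <=> query_embedding` ascending and add a `LIMIT`.

```python
import math
from typing import Callable

# Hypothetical: wraps your embeddings API and returns one vector per text.
Embed = Callable[[str], list[float]]


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0  # treat zero vectors as unrelated


class SemanticIndex:
    """In-memory stand-in for a content table with a pgvector column (illustrative)."""

    def __init__(self, embed: Embed) -> None:
        self.embed = embed
        self.rows: dict[str, tuple[str, list[float]]] = {}

    def save(self, doc_id: str, text: str) -> None:
        # Step 2: generate the embedding on write and store it with the content.
        self.rows[doc_id] = (text, self.embed(text))

    def search(self, query: str, k: int = 5) -> list[tuple[str, float]]:
        # Step 3: embed the query, score every stored vector, return the top k.
        q = self.embed(query)
        scored = [(doc_id, cosine_similarity(q, vec)) for doc_id, (_, vec) in self.rows.items()]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:k]
```

A linear scan like this is only for illustration. Past a few thousand rows you would let pgvector (ideally with an index) or a vector database do the ranking.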

A recruitment SaaS team added semantic search across candidate profiles in 9 days using this exact pattern. Before: recruiters used keyword filters and missed 40% of relevant candidates. After: natural language queries against the full candidate database with relevance ranking. Recruiter productivity increased by 35% in the first month.

Three AI Feature Mistakes That Blow Up in Production

Fair warning — these are the mistakes we see most often when teams ship AI features without thinking through the edge cases.

❌ Sending user data to AI APIs without a privacy review

This drives me crazy because it's so easy to avoid. Before sending any customer data to an external AI API, check whether your terms of service permit it, whether your AI provider's data processing agreement covers your compliance requirements, and whether GDPR or HIPAA applies to that data. A startup sending customer healthcare records to a third-party AI API without a data processing agreement is a regulatory incident waiting to happen.

❌ No cost controls on AI API usage

Without per-user rate limits and spending caps, a single automated user can generate $3,000 in AI API costs in a weekend. Set spending limits at the API provider level and per-user rate limits at your application level. Track AI cost per customer. Know which customers are profitable and which are costing more to serve than they pay.
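Here is a minimal sketch of the application-level controls. It assumes a single process and in-memory counters. Production code would usually keep these in Redis or your database. `UsageGuard` is a hypothetical name, and the default limits are placeholders, not recommendations.

```python
import time
from collections import defaultdict, deque
from typing import Callable


class UsageGuard:
    """Hypothetical per-user sliding-window rate limit plus monthly spending cap."""

    def __init__(self, max_requests: int = 20, window_seconds: float = 60.0,
                 monthly_cap_usd: float = 50.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.monthly_cap_usd = monthly_cap_usd
        self.clock = clock
        self.requests: dict[str, deque[float]] = defaultdict(deque)
        # Keyed by (user_id, "YYYY-MM") so the cap resets each month.
        self.spend: dict[tuple[str, str], float] = defaultdict(float)

    def check(self, user_id: str, month: str) -> None:
        """Raise PermissionError if the user is over either limit; else count the request."""
        now = self.clock()
        window = self.requests[user_id]
        while window and now - window[0] >= self.window_seconds:
            window.popleft()  # drop requests that have aged out of the window
        if len(window) >= self.max_requests:
            raise PermissionError("rate limit exceeded")
        if self.spend[(user_id, month)] >= self.monthly_cap_usd:
            raise PermissionError("monthly AI spending cap reached")
        window.append(now)

    def record_cost(self, user_id: str, month: str, cost_usd: float) -> None:
        # Call after each AI request with the cost reported by your service layer.
        self.spend[(user_id, month)] += cost_usd
```

These checks complement the provider-level spending limit. They don't replace it, because a bug in your own code can still bypass them.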

❌ Treating AI output as reliable without validation

AI models hallucinate. For features where accuracy matters — legal clause extraction, financial data summarisation, medical record parsing — you need a human review step or a confidence threshold below which results are flagged. Never show unvalidated AI output in a context where the user might act on it without reviewing it first.
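This sketch shows one way to apply a confidence threshold. It rests on an assumption the article doesn't specify: each result already carries a score between 0 and 1, whether from a validator, agreement between repeated runs, or a score the model reports. Model-reported confidence is often poorly calibrated, so treat the 0.8 default as a placeholder to tune against results you've checked by hand. `AIResult` and `split_for_review` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class AIResult:
    value: str
    confidence: float  # 0.0 to 1.0; how it is derived is up to your pipeline (assumption)


def split_for_review(results: list[AIResult],
                     threshold: float = 0.8) -> tuple[list[AIResult], list[AIResult]]:
    """Partition results into (auto_show, needs_review) by confidence.

    Items in auto_show should still be labelled as AI-generated in the UI.
    """
    auto_show: list[AIResult] = []
    needs_review: list[AIResult] = []
    for result in results:
        if result.confidence >= threshold:
            auto_show.append(result)
        else:
            needs_review.append(result)  # route to a human before anyone acts on it
    return auto_show, needs_review
```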

[INTERNAL LINK: SaaS security best practices → saas-security-best-practices]


Five AI Features Worth Building — With Honest Build Estimates

These are the features we've seen move metrics for SaaS products that built them in 2025–2026, with real build times from teams of 2–4 developers.

🚀 Smart email draft assist (5–8 days)

The user writes a short note, and the AI expands it into a full professional email in their tone. Implementation: the Claude API with the user's previous emails as few-shot examples. Tone matching is the hard part. Solve it by including 3–5 emails from the user's own history as context. Usage impact: features like this increase daily active usage by 20–35% in productivity SaaS products.
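Here is a sketch of the prompt assembly for tone matching, assuming you already have the user's sent emails. `build_email_prompt` is a hypothetical helper. The instruction wording is a starting point to iterate on, not a tested prompt. The XML-style tags simply keep the examples separate from the note.

```python
def build_email_prompt(note: str, past_emails: list[str], max_examples: int = 5) -> str:
    """Hypothetical helper: few-shot prompt built from the user's own emails."""
    examples = past_emails[:max_examples]
    if len(examples) < 3:
        raise ValueError("need at least 3 example emails to match tone")
    shots = "\n\n".join(
        f"<example_email>\n{email.strip()}\n</example_email>" for email in examples
    )
    return (
        "Here are emails this user has written. Match their tone, length, "
        "greeting and sign-off style.\n\n"
        f"{shots}\n\n"
        "Expand the following note into a complete email in the same voice. "
        "Return only the email body.\n\n"
        f"<note>\n{note.strip()}\n</note>"
    )
```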

🚀 Automated report generation (1–2 weeks)

Pull data from your database, structure it as context, ask the AI to write a narrative summary. The hard work is the data pipeline, not the AI call. Most teams spend 80% of the build time on data preparation and 20% on the AI integration. Budget accordingly.
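Most of the work here is data preparation, so this sketch covers that half. It aggregates raw rows into a compact, labelled block of facts before any AI call is made. The row fields (`region`, `month`, `revenue`) and both function names are hypothetical stand-ins for your own schema and code.

```python
from collections import defaultdict


def summarise_for_prompt(rows: list[dict]) -> str:
    """Aggregate hypothetical rows {"region", "month", "revenue"} into labelled facts."""
    totals: dict[tuple[str, str], float] = defaultdict(float)
    for row in rows:
        totals[(row["region"], row["month"])] += row["revenue"]
    lines = [f"- {region} {month}: revenue {amount:,.2f}"
             for (region, month), amount in sorted(totals.items())]
    return "Monthly revenue by region:\n" + "\n".join(lines)


def build_report_prompt(rows: list[dict]) -> str:
    # Pre-computed totals keep the arithmetic out of the model.
    return (summarise_for_prompt(rows)
            + "\n\nWrite a short narrative summary of these figures for an executive. "
              "Only cite numbers that appear above.")
```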

🚀 Intelligent onboarding assistant (1–2 weeks)

A chat interface that answers product questions using your documentation as context. Implementation: embed your docs, run similarity search on user questions, pass relevant docs to Claude as context, return an answer. This is Retrieval Augmented Generation (RAG) — don't let the acronym intimidate you. It's embeddings plus similarity search plus an API call.
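Here is a sketch of the final RAG step. It assumes similarity search has already returned documents ranked by relevance. `build_rag_prompt` is a hypothetical helper, and `max_chars` is a crude stand-in for a real token budget.

```python
def build_rag_prompt(question: str, retrieved: list[tuple[str, str]],
                     max_chars: int = 8000) -> str:
    """Hypothetical helper: (title, text) pairs, best match first, plus the question."""
    blocks: list[str] = []
    used = 0
    for title, text in retrieved:
        block = f'<doc title="{title}">\n{text}\n</doc>'
        if used + len(block) > max_chars:
            break  # stop before overflowing the context budget
        blocks.append(block)
        used += len(block)
    context = "\n".join(blocks)
    return (
        "Answer the question using only the documentation below. "
        "If the answer is not in the documentation, say you don't know.\n\n"
        f"{context}\n\nQuestion: {question}"
    )
```

The instruction to admit when the answer isn't in the docs is a common guard against the assistant inventing product behaviour.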

🚀 Anomaly detection in data (2–3 weeks)

Send recent data points to the AI with context about what normal looks like. Ask it to identify anomalies and explain them. This is genuinely useful for analytics, monitoring, and financial SaaS. It works well with Claude's long context window: you can send 60–90 days of daily or hourly data in a single prompt.
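This sketch shows one way to tell the model what normal looks like. It assumes daily (date, value) pairs with the oldest first. Summarising the baseline window as a mean and standard deviation is an illustrative choice, not something the approach requires; you could send the raw baseline instead. `build_anomaly_prompt` is a hypothetical name.

```python
import statistics


def build_anomaly_prompt(series: list[tuple[str, float]], baseline_days: int = 60) -> str:
    """Hypothetical helper: baseline stats from the first `baseline_days` points,
    then the remaining recent points for the model to assess.

    Needs at least baseline_days + 1 points, and baseline_days >= 2 for stdev.
    """
    if len(series) <= baseline_days:
        raise ValueError("not enough history for a baseline")
    baseline = [value for _, value in series[:baseline_days]]
    recent = series[baseline_days:]
    mean = statistics.fmean(baseline)
    stdev = statistics.stdev(baseline)
    recent_lines = "\n".join(f"{day}: {value}" for day, value in recent)
    return (
        f"Baseline over {baseline_days} days: mean {mean:.2f}, "
        f"standard deviation {stdev:.2f}.\n"
        f"Recent values:\n{recent_lines}\n\n"
        "Identify any recent values that look anomalous relative to the baseline "
        "and explain the likely cause of each."
    )
```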

🚀 Customer health scoring (1–2 weeks)

Feed usage data, support ticket history, and payment history into a structured prompt. Ask for a health score with reasoning. Then use the reasoning to power proactive CSM outreach. This is a feature that pays for itself — teams using AI health scoring report 15–25% improvement in churn prediction accuracy compared to rule-based scoring.
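This sketch covers the input side of a health-score prompt. The signal fields are hypothetical; you would map them from your own usage, support, and billing tables. Precomputing derived values such as the usage change keeps the arithmetic out of the model. The reply can then be validated like any other structured output.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class AccountSignals:
    # Hypothetical fields; map them from your own usage, support and billing data.
    active_users_last_30d: int
    active_users_prev_30d: int
    open_support_tickets: int
    failed_payments_last_90d: int


def build_health_prompt(account_id: str, s: AccountSignals) -> str:
    """Hypothetical helper: serialise account signals for a health-score prompt."""
    usage_change = (
        (s.active_users_last_30d - s.active_users_prev_30d) / s.active_users_prev_30d
        if s.active_users_prev_30d else None
    )
    payload = {
        "account_id": account_id,
        **asdict(s),
        "usage_change_pct": None if usage_change is None else round(usage_change * 100, 1),
    }
    return (
        "Score this customer's health from 0 (likely to churn) to 100 (healthy) "
        "and explain the main reasons, so a CSM can act on them. "
        'Respond as JSON: {"score": <int>, "reasons": [<string>, ...]}.\n\n'
        + json.dumps(payload, sort_keys=True)
    )
```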

[EXTERNAL LINK: OpenAI API pricing → platform.openai.com/pricing]

The Bottom Line

You don't need a machine learning team to add AI features to SaaS. Hosted APIs from Anthropic (Claude) and OpenAI (including Whisper) cover 95% of what SaaS products actually need, with no model training required.

Build a thin AI service layer on your backend. Never call AI APIs directly from the frontend. You lose control over rate limiting, cost tracking, caching, and privacy compliance.

Semantic search is the highest-ROI AI feature for content-heavy SaaS products. pgvector on Postgres handles it for free at early scale. Pinecone starts at $70/month for production workloads.

Set per-user rate limits and API spending caps before going live. Without controls, a single automated user can generate thousands of dollars in API costs in a weekend.

Use structured JSON output from AI APIs. Parsing free-form text adds weeks of edge-case handling. Structured output gives you typed data you can use directly.

Before sending customer data to any AI API, complete a privacy review. Verify your provider's data processing agreement covers your compliance requirements — especially for GDPR or HIPAA-regulated data.

Frequently Asked Questions

Can I add AI features to my SaaS without machine learning experience?

Yes — and this describes the vast majority of SaaS teams that ship AI features in 2026. Modern AI APIs like Claude, GPT-4o, and Gemini are HTTP endpoints with JSON inputs and outputs. If your team can call a REST API and handle JSON, they can build AI features. The ML expertise you'd need for custom model training is simply not required when you're using pre-trained foundation models through an API.
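To show what "an HTTP endpoint with JSON" means in practice, this sketch builds a raw request to Anthropic's Messages API using only the Python standard library. The endpoint, headers, and body fields follow Anthropic's documented request format. The model ID is a parameter because available models change. `send` assumes the first content block in the reply is text.

```python
import json
import urllib.request


def build_messages_request(api_key: str, model: str, prompt: str,
                           max_tokens: int = 1024) -> urllib.request.Request:
    """Build (but do not send) a POST to Anthropic's Messages API."""
    body = {
        "model": model,  # pass a current model ID from Anthropic's docs
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        "https://api.anthropic.com/v1/messages",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,  # keep this on your backend, never in client code
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )


def send(req: urllib.request.Request) -> str:
    with urllib.request.urlopen(req) as resp:
        data = json.load(resp)
    # Assumes the first content block is a text block.
    return data["content"][0]["text"]
```

In a real product you would normally use the official SDK, but the request underneath is no more than this.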

How much does it cost to add AI features to a SaaS product?

For API-based AI features, costs range from $0.001 to $0.15 per operation, depending on the model and task complexity. At those rates, a feature generating 10,000 AI operations per month costs roughly $10–$1,500 in API fees. The bigger cost is developer time: 1–3 weeks for most standard features. Custom model training is a different budget entirely: $50,000+ before you have anything production-ready.
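The range above is simple arithmetic. This sketch lets you plug in your own volumes and prices, assuming you pay per operation or per token. Prices are parameters because they change, so check your provider's current pricing page. Both function names are hypothetical.

```python
def cost_per_month(operations_per_month: int, usd_per_operation: float) -> float:
    """API spend for a feature priced per operation (hypothetical helper)."""
    return operations_per_month * usd_per_operation


def token_cost(tokens: int, usd_per_1k_tokens: float) -> float:
    """API spend for usage priced per 1,000 tokens, e.g. embeddings (hypothetical helper)."""
    return tokens / 1000 * usd_per_1k_tokens


low = cost_per_month(10_000, 0.001)
high = cost_per_month(10_000, 0.15)
print(f"${low:,.0f} to ${high:,.0f} per month")  # prints: $10 to $1,500 per month

# Embedding 1M tokens at $0.00002 per 1,000 tokens (text-embedding-3-small)
print(f"${token_cost(1_000_000, 0.00002):.2f}")  # prints: $0.02
```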

Which AI API is best for adding features to a SaaS product?

Claude (Anthropic) leads for document processing, long-form analysis, and tasks requiring careful reasoning. Its 200K-token context window fits documents that exceed GPT-4o's 128K-token limit. GPT-4o leads for image analysis, multimodal tasks, and the broadest ecosystem of third-party tooling. Gemini is competitive on cost and excels at structured data tasks. Most teams use Claude for reasoning-heavy features and OpenAI for image or audio tasks.

What is the best way to add semantic search to a SaaS product?

If you're already on Supabase or Postgres, enable pgvector and store embeddings alongside your content. It's free and handles most early-scale workloads. Generate embeddings using OpenAI's text-embedding-3-small at $0.00002 per 1,000 tokens. For more advanced retrieval at larger scale, Pinecone is the leading dedicated vector database at $70/month for production. Most teams should start with pgvector and migrate to Pinecone only if performance requires it.

How do I prevent AI API costs from getting out of control?

Three controls: per-user rate limits in your application code, spending caps set at the API provider level (OpenAI, Anthropic, and Google all offer this), and cost tracking per customer in your database. Know your AI cost per customer per month. If any customer's AI costs exceed what they pay you, either limit their usage or price the feature accordingly. Treat AI API cost as a line item in your COGS — not as a fixed infrastructure expense.
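This sketch implements the "know your AI cost per customer" check. It assumes you already aggregate each customer's monthly revenue and AI cost, for example from a per-request cost log. `CustomerMonth` and `unprofitable_ai_customers` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class CustomerMonth:
    customer_id: str
    revenue_usd: float  # what the customer paid this month
    ai_cost_usd: float  # summed from your per-request cost log (assumption)


def unprofitable_ai_customers(rows: list[CustomerMonth],
                              max_ai_share: float = 1.0) -> list[str]:
    """Return customers whose AI cost exceeds max_ai_share of their revenue.

    max_ai_share=1.0 flags customers whose AI bill alone exceeds what they pay;
    a lower value (e.g. 0.3) flags margin pressure earlier.
    """
    return [r.customer_id for r in rows if r.ai_cost_usd > r.revenue_usd * max_ai_share]
```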

Is RAG (Retrieval Augmented Generation) hard to build for a SaaS product?

Less hard than the acronym suggests. RAG is three steps: embed your documents and store the vectors, convert the user's query to an embedding and find similar documents, then pass those documents as context to an LLM and return its answer. A developer who has worked with embeddings and vector databases before can build a working RAG pipeline in 1–2 weeks. LangChain and LlamaIndex provide pre-built abstractions if you want to move faster at the cost of some flexibility.

What are the compliance risks of using AI APIs in a SaaS product?

The primary risks are data privacy (sending customer data to third-party AI providers without adequate legal agreements) and output accuracy (displaying AI-generated content that users rely on for decisions). For GDPR, you need a data processing agreement with your AI provider and must disclose AI processing in your privacy policy. For HIPAA, an AI provider that processes protected health information on your behalf is a business associate, not a covered entity. You need a signed business associate agreement (BAA) before sending it any PHI, and not every provider or plan offers one. For accuracy-critical features, add human review steps or confidence thresholds below which results are flagged rather than shown.

How long does it take to add AI features to an existing SaaS product?

Simple text-based features — content generation, summarisation, classification — take 2–7 days for a developer with API experience. Semantic search or RAG pipelines take 1–2 weeks. More complex features like AI agents, multi-step reasoning workflows, or custom fine-tuning take 3–8 weeks. The biggest time variables are prompt engineering (getting reliable output quality), data pipeline work, and UI design for displaying AI results in a way users understand.

Need a Developer Who Knows AI APIs, Not Just Buzzwords?

Devshire.ai matches SaaS teams with pre-vetted developers who have shipped real AI features — Claude API integrations, semantic search, RAG pipelines, and structured output workflows. Skip the ramp time. Shortlist in 48 hours.

Find Your AI-Native Developer ->

Claude + OpenAI vetted · SaaS AI specialists · Shortlist in 48 hrs · Median hire in 11 days

Related reading: Best Tech Stack for Startups in 2026 · Automate Your Startup Backend With AI and Node.js · API-First Development for SaaS

Stats source: [EXTERNAL LINK: OpenAI API pricing and usage data → platform.openai.com/pricing]

Related image: Claude API documentation diagram — anthropic.com/docs

Related video: "Building AI Features Into Your SaaS" — Fireship YouTube channel (1M+ subscribers)

Devshire Team

San Francisco · Responds in <2 hours

Hire your first AI developer — this week

Book a free 30-minute call. We'll match you with the right developer for your project and get you started within 24 hours.

<24h time to hire · 3× faster builds · 40% cost saved